Last week Jesus and Mo talked to the barmaid about freedom of speech.

That kind of reminds me of what was going on last week at the blog network I used to belong to. I’m sure it’s totally a coincidence though. Totally.

I have an email from the Human Rights Campaign. They tell a story about a gay man in Nebraska who was passive-aggressively fired from his part-time job at a wine store after his boyfriend came to visit. They want more stories to share.

Stories like Luke’s remind me why we cannot stop fighting.

They are the backbone of HRC’s mission. They are the reason that we are relentlessly advocating to pass comprehensive non-discrimination legislation. And they are also how we change hearts and minds, and inspire others to become advocates for equality.

If you have faced discrimination based on your sexual orientation or gender identity, or if you know someone who has, we want to know about it. Please take a minute to share your story with HRC. We promise to do everything in our power to support you and ensure that it NEVER happens again.

I’m wondering what they mean by “gender identity” there. I’m guessing it refers to trans people? The orientation part is for lesbians and gays, and the gender identity part is for trans people?

But then, does that mean that only trans people have gender identity? What is gender identity, exactly?

The terminology seems to be changing quite fast, and the penalty for getting it wrong can be ferocious. This can make things tricky.

Callimachi’s Times article goes on to describe the way IS has developed a whole theology around its enslavement and marketing of Yazidi women and girls.

The trade in Yazidi women and girls has created a persistent infrastructure, with a network of warehouses where the victims are held, viewing rooms where they are inspected and marketed, and a dedicated fleet of buses used to transport them.

A thriving slave trade, selling enslaved girls and women for permanent rape.

A total of 5,270 Yazidis were abducted last year, and at least 3,144 are still being held, according to community leaders. To handle them, the Islamic State has developed a detailed bureaucracy of sex slavery, including sales contracts notarized by the ISIS-run Islamic courts. And the practice has become an established recruiting tool to lure men from deeply conservative Muslim societies, where casual sex is taboo and dating is forbidden.

Yay! Run off to the fascist theocracy so that you can fuck like a weasel and murder people wholesale. Win win!

“Every time that he came to rape me, he would pray,” said F, a 15-year-old girl who was captured on the shoulder of Mount Sinjar one year ago and was sold to an Iraqi fighter in his 20s…

“He kept telling me this is ibadah,” she said, using a term from Islamic scripture meaning worship.

“He said that raping me is his prayer to God. I said to him, ‘What you’re doing to me is wrong, and it will not bring you closer to God.’ And he said, ‘No, it’s allowed. It’s halal,’ ” said the teenager, who escaped in April with the help of smugglers after being enslaved for nearly nine months.

It’s halal, and that’s all that counts – it’s “allowed” by the long-dead human man and the non-existent god.

In much the same way as specific Bible passages were used centuries later to support the slave trade in the United States, the Islamic State cites specific verses or stories in the Quran or else in the Sunna, the traditions based on the sayings and deeds of the Prophet Muhammad, to justify their human trafficking, experts say.

Scholars of Islamic theology disagree, however, on the proper interpretation of these verses, and on the divisive question of whether Islam actually sanctions slavery.

Many argue that slavery figures in Islamic scripture in much the same way that it figures in the Bible — as a reflection of the period in antiquity in which the religion was born.

So then the Bible is just a book like any other book, with moral views common to its time, and so is the Quran. There’s nothing special about them.

The use of sex slavery by the Islamic State initially surprised even the group’s most ardent supporters, many of whom sparred with journalists online after the first reports of systematic rape.

The Islamic State’s leadership has repeatedly sought to justify the practice to its internal audience.

After the initial article in Dabiq in October, the issue came up in the publication again this year, in an editorial in May that expressed the writer’s hurt and dismay at the fact that some of the group’s own sympathizers had questioned the institution of slavery.

Aw, that must have stung. Allies can be so disappointing.

“What really alarmed me was that some of the Islamic State’s supporters started denying the matter as if the soldiers of the Khilafah had committed a mistake or evil,” the author wrote. “I write this while the letters drip of pride,” he said. “We have indeed raided and captured the kafirah women and drove them like sheep by the edge of the sword.”

Yeah, that’s goddy morality for you.

Just about the only prohibition is having sex with a pregnant slave, and the manual describes how an owner must wait for a female captive to have her menstruating cycle, in order to “make sure there is nothing in her womb,” before having intercourse with her. Of the 21 women and girls interviewed for this article, among the only ones who had not been raped were the women who were already pregnant at the moment of their capture, as well as those who were past menopause.

Beyond that, there appears to be no bounds to what is sexually permissible. Child rape is explicitly condoned: “It is permissible to have intercourse with the female slave who hasn’t reached puberty, if she is fit for intercourse,” according to a translation by the Middle East Media Research Institute of a pamphlet published on Twitter last December.

“Fit for intercourse” meaning…her hole is big enough? But some men will rape infants, so size doesn’t seem to matter. Theology can be difficult.

One 34-year-old Yazidi woman, who was bought and repeatedly raped by a Saudi fighter in the Syrian city of Shadadi, described how she fared better than the second slave in the household — a 12-year-old girl who was raped for days on end despite heavy bleeding.

“He destroyed her body. She was badly infected. The fighter kept coming and asking me, ‘Why does she smell so bad?’ And I said, she has an infection on the inside, you need to take care of her,” the woman said.

Unmoved, he ignored the girl’s agony, continuing the ritual of praying before and after raping the child.

“I said to him, ‘She’s just a little girl,’ ” the older woman recalled. “And he answered: ‘No. She’s not a little girl. She’s a slave. And she knows exactly how to have sex.’ ’’

“And having sex with her pleases God,” he said.

Again…what a loathsome dreadful god.

Prepare for extreme disgust as you begin to read this article by Rukmini Callimachi in the NY Times.

QADIYA, Iraq — In the moments before he raped the 12-year-old girl, the Islamic State fighter took the time to explain that what he was about to do was not a sin. Because the preteen girl practiced a religion other than Islam, the Quran not only gave him the right to rape her — it condoned and encouraged it, he insisted.

He bound her hands and gagged her. Then he knelt beside the bed and prostrated himself in prayer before getting on top of her.

When it was over, he knelt to pray again, bookending the rape with acts of religious devotion.

He “explained” to the child that the appalling harm he was about to do to her – physically, emotionally, psychologically – that it was not a “sin” – that is, not a bad thing to do to Allah.

But that’s not the issue. Allah can take care of Allah. (Also Allah doesn’t exist. But I digress.) Allah is beside the point. Raping a child is harm to the child raped, before it’s a harm to anyone else. It’s also a harm to everyone who cares about the child, but it’s a harm to those people because it’s a harm to her. What others are hurt by is the terrible harm done to the child; it harms them because they care about her and have empathy for her. The core harm is the harm to the child. “Sin” is entirely beside the point, unless it’s used in a purely secular sense, which obviously is not the case with Mr Pious here.

It compounds the harm and disgustingness that he told her she had it coming, because she wasn’t a member of his religion. (And what a disgusting religion to be a member of – one that smiles on child-rape of infidels.)

He told her it wasn’t a “sin” and that the Quran is cool with it, and then he bound and gagged her…I suppose he must have realized that despite being told it wasn’t a sin, she would probably struggle and cry and scream, and he wanted her to hold still and shut up so that he could fuck her without distractions.

“I kept telling him it hurts — please stop,” said the girl, whose body is so small an adult could circle her waist with two hands. “He told me that according to Islam he is allowed to rape an unbeliever. He said that by raping me, he is drawing closer to God,” she said in an interview alongside her family in a refugee camp here, to which she escaped after 11 months of captivity.

What a filthy evil malevolent god he prostrates himself to.

From an interview with Cordelia Fine by Anna Lena Phillips in American Scientist around 2010.

What first motivated me to write the book was an experience I had as a parent, rather than as an academic. I read a book which claimed that hardwired sex differences mean that boys and girls should be parented and taught differently. I found this really interesting—but when I looked at the actual studies being used as evidence, I was shocked by the extent to which the neuroscientific findings were being misrepresented. So my initial motivation was simply to alert people to the fact that old-fashioned stereotypes are being dressed up in neuroscientific finery, and to remind people not to be so enthralled with brain imaging that they forget the importance of social factors.

But when I started to look more closely at the scientific literature itself, I was surprised to discover just how little really concrete evidence there is for the idea that there’s such a thing as a “male” brain hardwired to be good at understanding the world, and a “female” brain hardwired to understand people. Instead what I found was a great deal of evidence that our minds are exquisitely attuned to the social environment, and surprisingly sensitive to gender stereotypes. The problem then becomes that these very confident popular claims about “male” brains and “female” brains reinforce gender stereotypes in ways that have self-fulfilling effects on the way we think and behave. And so at that point my aim for the book became to explain this much more complex, and actually much more interesting, picture of the state of the science in a way that would be accessible to everyone. I hope it will help to dispel the belief, encouraged by many popular commentators, that science has shown that hardwired sex differences mean that it’s pointless to hope or strive for greater sex equality.

Now to get more people to pay attention.

Update: The story is from 2011. I blogged it then.

The BBC reports an incredibly depressing situation in the UK.

Britain’s madrassas have faced more than 400 allegations of physical abuse in the past three years, a BBC investigation has discovered.

But only a tiny number have led to successful prosecutions. The revelation has led to calls for formal regulation of the schools, attended by more than 250,000 Muslim children every day for Koran lessons.

That’s a lot of children. And – every day? That’s a lot of time, too. And the “lessons” are just memorization of the Koran in Arabic – they’re about the most futile time-wasting kind of “lessons” it’s possible to have.

And on top of that they’re abused.

BBC Radio 4’s File on 4 asked more than 200 local authorities in England, Scotland and Wales how many allegations of physical and sexual abuse had come to light in the past three years.

One hundred and ninety-one of them agreed to provide information, disclosing a total of 421 cases of physical abuse. But only 10 of those cases went to court, and the BBC was only able to identify two that led to convictions.

421 cases, 10 prosecutions, 2 convictions. 2 out of 421.

Some local authorities said community pressure had led families to withdraw complaints.

In one physical abuse case in Lambeth, two members of staff at a mosque allegedly attacked children with pencils and a phone cable – but the victims later refused to take the case further.

In Lancashire, police added that children as young as six had reported being punched in the back, slapped, kicked and having their hair pulled.

In several cases, pupils said they were hit with sticks or other implements.

All in aid of memorizing a “holy” book in a language they don’t know.

Nazir Afzal, the chief crown prosecutor for the North West of England, said he believed the BBC’s figures represented “a significant underestimate”.

“We have a duty to ensure that people feel confident about coming forward,” he said.

“If there is one victim there will be more, and therefore it is essential for victims to come forward, for parents to support them and for criminal justice practitioners to take these incidents seriously.”

Corporal punishment is legal in religious settings, so long as it does not exceed “reasonable chastisement”.

What? Corporal punishment is legal in religious settings? Why? Why is religion allowed to assault people when no one else is?

What a sick mess.

H/t Gina Khan

Let’s consult the Stanford Encyclopedia of Philosophy for a moment.

Berkeley famously rejected material substance, because he rejected all existence outside the mind. In his early Notebooks, he toyed with the idea of rejecting immaterial substance, because we could have no idea of it, and reducing the self to a collection of the ‘ideas’ that constituted its contents. Finally, he decided that the self, conceived as something over and above the ideas of which it was aware, was essential for an adequate understanding of the human person. Although the self and its acts are not presented to consciousness as objects of awareness, we are obliquely aware of them simply by dint of being active subjects. Hume rejected such claims, and proclaimed the self to be nothing more than a concatenation of its ephemeral contents.

One damn thing after another, with an illusion that they all add up to a single Self.

In fact, Hume criticised the whole conception of substance for lacking in empirical content: when you search for the owner of the properties that make up a substance, you find nothing but further properties. Consequently, the mind is, he claimed, nothing but a ‘bundle’ or ‘heap’ of impressions and ideas—that is, of particular mental states or events, without an owner.

It’s quite a cheerful way of looking at it, because it loosens up the sense of personal investment.

Psychotherapy is also interested.

Neuroscience, social psychology, and artificial intelligence all agree that each of us consists of a multiplicity of identities that account for the richness and complexity of the human experience.

In other words, no one is a “unitary” self. At the same time, there’s more than one way to use this knowledge to elicit therapeutic healing, self-awareness, and growth. This workshop will showcase how two noted psychotherapists bring the concept of multiplicity into their therapeutic work.

- Help clients not over-identify with a single part of themselves, and empower them to move beyond the diagnostic labels they feel define them

If one single part of yourself is giving you the pip, switch your attention to a different one.

It can be hard to sideline an identity if the outside world is intent on tormenting you over it. But when it’s not, and/or when we can escape from the outside world for a while…we can be a bunch of different selves. We don’t have to nail ourselves to any of them.

It’s happened before. The BBC reported in March 2013:

Sikh weddings are regularly disrupted by protesters opposed to mixed-faith marriages in gurdwaras, a BBC Asian Network investigation has found.

Victims and their families have accused the protesters – who believe non-Sikhs should not be getting married in Sikh temples – of threatening behaviour.

In some cases, protesters have barricaded themselves inside gurdwaras to prevent ceremonies taking place.

Last year the windows of a family’s house in Coventry were smashed.

That happened right before a “mixed” wedding in a nearby gurdwara.

The father of the bride told BBC Asian Network the house was targeted because his daughter was marrying a Hindu in a Sikh temple.

He said: “Some of these people didn’t want the wedding to go ahead. This was the way for them to frighten me.”

The couple ended up having a police escort for the wedding.

In another incident, a bunch of “protesters” locked a couple out of their own wedding in Swindon – the “protesters” inside the gates, the people who wanted to get married outside.

One of the protesters, speaking anonymously to the BBC Asian Network, said: “The last thing I want is to go to a gurdwara and cause trouble. I can say hand on heart that we have never resorted to violence. We don’t want to do this.”

But he said he believed it was hypocritical for a bride or groom to go through a ceremony when they do not truly believe in the Sikh faith.

See there it is again, that ridiculous idea that the “hypocrisy” of a stranger is something he gets to act on, by forcibly preventing people from getting married in the gurdwara. That’s all wrong. It’s not his business. It’s nothing to do with him. He doesn’t get to interfere with it.

There are around 300 gurdwaras in Britain and each is run by elected committees of worshippers.

The rules on the anand karaj, which is the formal name for the Sikh wedding, are set by the religion’s governing body which is based at the Golden Temple in Amritsar, India.

In 2007 it advised gurdwaras the anand karaj should only be between two Sikhs and the protesters say some gurdwara committees are not respecting the faith by allowing non-Sikhs who do not believe in the religion to marry there.

Tough. Get on the committee if you can, try to change policy that way; other than that, it’s none of your business.

The Sikh Council – an umbrella body for Sikh organisations in the UK – has condemned the violence and threats but agrees with the sentiment of the protesters.

The council’s secretary general, Gurmel Singh, said: “I would say there is no place in a modern Britain for any community to resort to violent threatening behaviour.”

But Mr Singh said: “The person getting married has to accept the concept of one god and renounce any other beliefs they may hold which are contrary to that.

“They would also need to understand what the Sikh marriage entails. They would need to adopt (the surname) Singh or Kaur as they are what defines a Sikh. We don’t have legal powers so it is not legally enforceable but it is a social contract a contract of commitment.”

Yes, that’s it right there. It’s not legally enforceable, and thus it’s not enforceable by violent men who invade other people’s weddings, either. You can’t enforce it. Adults don’t get to enforce their rules on other adults that way.

If they’re in charge of the gurdwara, then they can refuse to perform mixed marriages. But other than that – they have to mind their own business.

Dr Piara Singh Bhogal has sat on the committee that runs the Ramgariha gurdwara in Birmingham and he said he shared the protesters’ views on Sikh-only weddings but objects to the way protesters are ruining the most important day of a couple’s life.

“This issue now is becoming quite serious because ceremonies have been disrupted. I am hearing about once a month, sometimes twice a month ceremonies are being disrupted. People are getting scared,” he said.

Less policing, more getting on with one’s own life.

Wow. This is hideous – from the Independent:

A group of men have stormed a Sikh temple in London to stop an inter-faith marriage, forcing the couple to cancel their wedding day.

Members of the Sri Guru Singh Sabha Gurdwara, in Southall, said the final preparations were underway on Friday when the men arrived.

Sohan Singh Sumra, vice-president of the temple, told The Independent a group of up to 22 ~~people~~ men arrived shortly after 8am. “They were all thugs,” he added. “None of them were recognised by any of the Sikh groups here.

“It was because it was a mixed marriage…they just came here to spoil it and intimidate us.”

[amendment mine]

What possible business was it of theirs? Yes I know religious fanatics want all adherents of their religion to be fanatics too, but you can’t always get what you want. Strangers don’t get to decide about other people’s marriages. It’s none of their business. They need to go do something else – embroidery, or making a meal, or a long walk off a short pier.

“I’ve been in this temple since 1994 and I’ve never seen this sort of thing,” Mr Sumra said. “We will always listen to people’s suggestions but there was no reasoning with them. It was a sad day.”

Always? All people? All suggestions? Even impertinent suggestions from total strangers about who should marry whom? I don’t think anyone should listen to suggestions of that kind.

In October last year, the UK’s Sikh Council released guidelines on inter-faith marriages saying that gurdwaras must ensure the “genuine acceptance of Sikh faith” in both partners, proposing the use of signed declarations.

Nope. Gurdwaras must ensure no such thing.

I suppose that gurdwaras, being religious institutions, can decline to perform the marriages of Sikhs to non-Sikhs, if they want to be assholes about it. But they can’t tell people what to do. The Sikh Council, whatever that is, isn’t the same thing as a gurdwara.

A statement released on Wednesday on behalf of the protesters by the Sikh Press Association said the men were conducting a peaceful protest.

“Some gurdwaras in the UK are simply ignoring rulings by Sikh authorities, so protesting is our only option,” protester Jaspal Singh said.

Bollocks. It’s none of your business. Go all the way away and stay there.

“Blocking the wedding is always our last resort…people of all faiths and backgrounds are always welcome in any gurdwara.

“However, it has been made clear the Anand Karaj (marriage ceremony) is specifically for Sikhs.

“We have no grievances with any of the couples, nor any problem with mixed race or inter-faith marriages. Our issue is with those in charge of our gurdwaras.”

It’s none of your business. Go find some business of your own to mind.

Kylie Morris of Channel 4 in Britain visited Ferguson on Tuesday and tried to explain to the British people why white “Oath Keepers” were allowed to openly carry firearms on the street while peaceful black protesters were arrested.

“The men, calling themselves Oath Keepers, only added to the already simmering tensions,” a Channel 4 anchor noted before tossing to Morris for a live report from Ferguson.

Morris told her British audience that the commemoration of the one year anniversary of Michael Brown’s death had been interrupted by armed Oath Keepers, who claimed that they were there to protect businesses and conservative journalists.

“But certainly their presence, their state of wearing uniforms, military-type uniforms — some of them are ex-military, some of them are even serving police, we’re told,” she reported. “They were, you know, carrying weapons.”

Um…before we even get to the racist double standard, let’s just consider this business of men in military-type uniforms walking around with visible guns to “protect” something or other. Isn’t that fascism? Like, fascism in the most literal sense possible? Core fascism, echt fascism, fascism-in-itself? Civilians wearing made-up uniforms parading around with guns? Like the, you know, Brownshirts, aka Stormtroopers? Like Mussolini’s Blackshirts?

Yes, it is. That’s fascism, people. That’s fascism in a suburb of St Louis, Missouri. Meet me in St Louis, Louis, meet me at the gunshow.

Morris noted that black protesters faced pepper spray, arrests and other actions by riot police.

The Oath Keepers, however, “openly carry sidearms and semi-automatic weapons as is their right,” she said.

“You and people who look like you, white males, have the sovereignty to walk around with assault rifles,” one black protester told a white Oath Keeper. “But we [black protesters] can’t even like stand out here and assemble peacefully and exercise our constitutional right to do so without being gassed, maced and arrested.”

Almost as if black people are the Jews.

I kid. It’s not almost at all; it’s exactly.

Morris said that she had a hard time understanding why police would arrest otherwise peaceful protesters.

“It’s hard to know how this keeps the peace, putting civil rights leaders in handcuffs, including the prominent philosopher Cornel West and Black Lives Matter activist DeRay Mckesson during a peaceful sit-in outside the federal court,” she remarked.

During an interview with Mckesson, she asked if the police appeared to be taking retribution on the protesters for all of the negative attention the Black Lives Matter movement had brought to the area.

“There is a long history of law enforcement targeting people who are fighting for their rights,” Mckesson told Morris. “The reality is that the status quo didn’t just happen overnight, the status quo [developed] over many years and people work hard to reinforce it, including the police.”

Morris noted that there was a “right-wing push back” against the Black Lives Matter movement, which she said “feels more and more vociferous every day.”

“There are people whose bread and butter is sustaining racist oppressive systems like for-profit prisons,” Mckesson said. “The right’s response to us is a sign the movement is working.”

It’s also fascism. It’s open, frank, unabashed fascism.

Via Rachel Dwyer @RachelMJDwyer Aug 11

Karol Wojtyla was referred to in Saturday’s Credo column as “the first non-Catholic pope for 450 years”. This should, of course, have read “non-Italian”. We apologise for the error.

No need. I thank you for the error.

Hey it’s World Elephant Day. Twitter told me so.

So let’s have some elephants.

Via Laurent Baheux Photo @laurentbaheux 10h

Via Elephant Family @elephantfamily 5h

Via British Museum @britishmuseum 4h

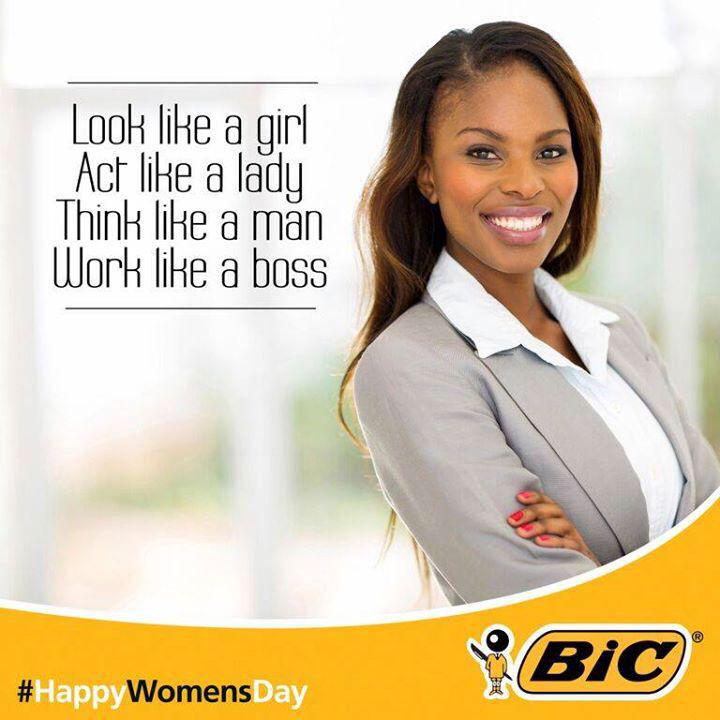

Bic South Africa, much to its surprise, is getting irritated reactions to its so clever and friendly ad in honor of national women’s day. They thought the ad was “empowering.”

Let’s see it again, so that we know where we are.

How is it “empowering” to tell women to look like a girl? How is it “empowering” to tell them to think like a man? How is it “empowering” not to mention the word “woman”? How is it “empowering” to tell women to be like every kind of person except a woman?

How is it “empowering” to tell women they should look childlike? How is it “empowering” to tell women that thinking belongs to men? How is it “empowering” to tell women to act like the prissy refined delicate version of their real selves? How is any of this anything other than insulting?

Among those taking to Twitter to condemn the advert, feminist activist Caroline Criado-Perez, who campaigned for a female face on UK banknotes, tweeted: “What fresh hell is this” and “srsly, ‘think like a man’…*stabs eyes out with bic pen*”.

Commentators were swift to point out it was not the first time the company has faced accusations of sexist marketing. Its pink “for her” pens in 2012 “designed to fit comfortably in a woman’s hand” were derided by, among many others, the US comedian Ellen Degeneres.

So, wiser and more alert after that experience, they –

– oh never mind.

Murdered women in the US. The Huffington Post last October:

At least one third of all female homicide victims in the U.S. are killed by male intimate partners — husbands and ex-husbands, boyfriends and estranged lovers. While both men and women experience domestic violence, the graphics below should put to rest the myth that abuse occurs equally to both sexes.

The graphics illustrate that 85% of victims of domestic violence are women, and that women are far more likely than men to be killed by domestic partners.

Since the landmark Violence Against Women Act was passed in 1994, annual rates of domestic violence have plummeted by 64 percent. But still today, an average of three women are killed every day. More often than not, women are shot. Over half of all women killed by intimate partners between 2001 to 2012 were killed using a gun.

Three women every single day, just in the US.

From Kaofeng Lee at NNEDV, National Network to End Domestic Violence:

During a visit home a few years ago, an old friend of my mother’s dropped by. As we talked, the conversation turned to her poor health. “It’s the stress, you know,” she said. “Stress can take such a toll on your body. I never really recovered after my daughter passed away.”

“What happened?” I asked.

“Well, dear, don’t you remember?” she said. “Her husband beat her to death with a hammer.”

In my shocked silence, she continued, “Oh, it was terrible. He hit her over and over again until she died. My oldest grandson had to leave the army to come home and take care of his younger siblings.”

One of the three for that day.

Today – October 1st – marks the first day of Domestic Violence Awareness Month. This month is a time to mourn those who have lost their lives, celebrate those who have survived, and connect all of us so we can work together to end violence.

The unfortunate fact is that so many of us know someone who has been affected by domestic violence—a friend whose creepy boyfriend we never really liked, or a family member who, years later, reveals harrowing abuse no one ever knew about, or a family friend whose daughter’s tragic murder weighs heavily on her every day of her life.

Three per day.

From the Violence Policy Center in September 2014:

More than 1,700 women were murdered by men in the United States in 2012, and more than 90 percent were killed by someone they knew, according to the new Violence Policy Center (VPC) report When Men Murder Women: An Analysis of 2012 Homicide Data.

That’s about 4.7 per day.
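For anyone who wants to check that figure, here is a rough sketch of the arithmetic. It assumes the 1,706 count given in the report's key findings and treats 2012 as a 366-day leap year; it also checks the 26 percent drop the report cites, from 1.57 to 1.16 per 100,000. The variable names are mine, not the report's.

```python
# Figures as quoted from the VPC report in this post.
women_killed_2012 = 1706  # females murdered by males, single victim/single offender
days_in_2012 = 366        # assumption: count every day of the leap year

per_day = women_killed_2012 / days_in_2012
print(f"{per_day:.1f} per day")

# Rates per 100,000 as quoted from the report for 1996 and 2012.
rate_1996 = 1.57
rate_2012 = 1.16
drop_pct = (rate_1996 - rate_2012) / rate_1996 * 100
print(f"{drop_pct:.0f} percent drop")
```

Using 365 days instead of 366 gives the same result to one decimal place.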

The Violence Policy Center has published When Men Murder Women annually for 17 years. During that period, nationwide the rate of women murdered by men in single victim/single offender incidents has dropped 26 percent — from 1.57 per 100,000 in 1996 to 1.16 per 100,000 in 2012.

However, the rate of women killed by men in the United States remains unacceptably high. A 2002 study from the Harvard School of Public Health found that the United States accounted for 84 percent of all female firearm homicides among 25 high-income countries, while representing only 32 percent of the female population.

The key findings in this year’s release of When Men Murder Women include:

- Nationwide, 1,706 females were murdered by males in single victim/single offender incidents in 2012, at a rate of 1.16 per 100,000.

- For homicides in which the victim to offender relationship could be identified, 93 percent of female victims nationwide were murdered by a male they knew. Of the victims who knew their offenders, 62 percent were wives, common-law wives, ex-wives, or girlfriends of the offenders.

It’s almost as if women are an oppressed class.

The BBC did a backgrounder piece by Naomi Grimley on Amnesty and the decriminalization of sex work yesterday.

It’s not often that a liberal newspaper like The Guardian rails against an organisation like Amnesty International.

But last week the paper ran a stinging editorial questioning the wisdom of the human rights group.

It said Amnesty would make a “serious mistake” if it advocated the decriminalisation of prostitution – a decision the group’s international council will vote on later on Tuesday.

Women’s groups and Jimmy Carter have said similar things.

Amnesty’s leaked proposal says decriminalisation would be “based on the human rights principle that consensual sexual conduct between adults is entitled to protection from state interference” so long as violence or child abuse or other illegal behaviour isn’t involved.

But you could call anything consensual and make it ok that way. Desperate people “consent” to do dangerous work, because they need to survive. Desperate people sometimes even “consent” to selling themselves into slavery, because they need to survive. Desperate people “consent” to living in neighborhoods near toxic landfills and the like. Consent isn’t always completely free.

Germany is one of the countries which liberalised its prostitution laws, together with New Zealand and the Netherlands.

One of the main reasons the Germans opted for legalisation in 2002 was the hope that it would professionalise the industry, giving prostitutes more access to benefits such as health insurance and pensions – just like in any other job.

…

But there are many who argue that the German experiment has gone badly wrong with very few prostitutes registering and being able to claim benefits. Above all, the number one criticism is that it’s boosted sex tourism and fuelled human trafficking to meet the demand of an expanded market.

Figures on human trafficking and its relationship to prostitution are hard to establish. But one academic study looking at 150 countries argued there was a link between relaxed prostitution laws and increased trafficking rates.

Other critics of the German model point to anecdotal evidence of growing numbers of young Romanian and Bulgarian women travelling to Germany to work on the streets or even in mega-brothels. An investigation in 2013 by Der Spiegel described how many of these women head to cities such as Cologne voluntarily but soon end up caught in a dangerous web they can’t easily escape.

But it’s good for the people who make the profit.

Huh. I’ve seen (and probably heard) that “bye Felicia” thing a few times, so this time I decided to look up its origin.

So predictable.

When someone says that they’re leaving and you could really give two shits less that they are. Their name then becomes “felicia”, a random bitch that nobody is sad to see go. They’re real name becomes irrelevant because nobody cares what it really is. Instead, they now are “felicia”.

“hey guys i’m gonna go”

“bye felicia”

“who is felicia?”

“exactly bitch. buh bye.”

The entries farther down are a little more informative, but no more alluring.

A line from the 1995 film “Friday” starring Ice Cube and Chris Tucker that is becoming increasingly popular for no reason. Just like twerking, it has been around and well established for many years, but has recently become more mainstream as white girls attempt to use it, most often incorrectly and oblivious to its origin.

That’s fine then, I’m a white hag, so I won’t attempt to use it.

Welp, that’s over.

From the Times again:

LONDON — Amnesty International has approved a controversial policy to endorse the de-criminalization of the sex trade, rejecting complaints by women’s rights groups who say it is tantamount to advocating the legalization of pimping and brothel owning.

Well, women’s rights groups…who cares what they say.

At its decision-making forum in Dublin on Tuesday, the human rights group approved the resolution to recommend “full decriminalization of all aspects of consensual sex work.” It argues its research suggests decriminalization is the best way to defend sex workers’ human rights.

While other people’s research suggests otherwise.

The Coalition Against Trafficking in Women has argued that while it agrees with Amnesty that those who are prostituted should not be criminalized, full de-criminalization would make pimps “businesspeople” who could sell the vulnerable with impunity.

Amnesty’s decision is important because it will lobby governments to accept its point of view.

Oh well, it’s only women.

Now that’s a helpful message…

I found something.

A Facebook group, Let Clothes Be Clothes.

Down with gender-separated clothes.

Let Clothes be Clothes is asking retailers in the UK to rethink how they design and market children’s clothing. Just like many of our supporters, we’re concerned about how colours, styles and themes are split into for girls or for boys, and what message that sends out to children and adults. Children should decide their own interests, favourite colours and wear the styles they find most comfortable and enjoyable to wear.

Please send us your photographs, petition links and blog posts, and help us promote a more positive culture that offers a full range of options to every child, encourages gender equality, prevents bullying and lets children be children.

Please follow us on Twitter @letclothesbe

Sounds like a fine idea to me.